Can You Close the Performance Gap Between GPU and CPU for Deep Learning Models? - Deci

By a mysterious writer

Description

How can CPUs be optimized to run deep learning models faster? This post discusses model efficiency and the performance gap between GPU and CPU inference.

Tinker-HP: Accelerating Molecular Dynamics Simulations of Large Complex Systems with Advanced Point Dipole Polarizable Force Fields Using GPUs and Multi-GPU Systems

Webinar: Can You Close the GPU and CPU Performance Gap for CNNs?

Deci simplifies deep learning apps on CPUs

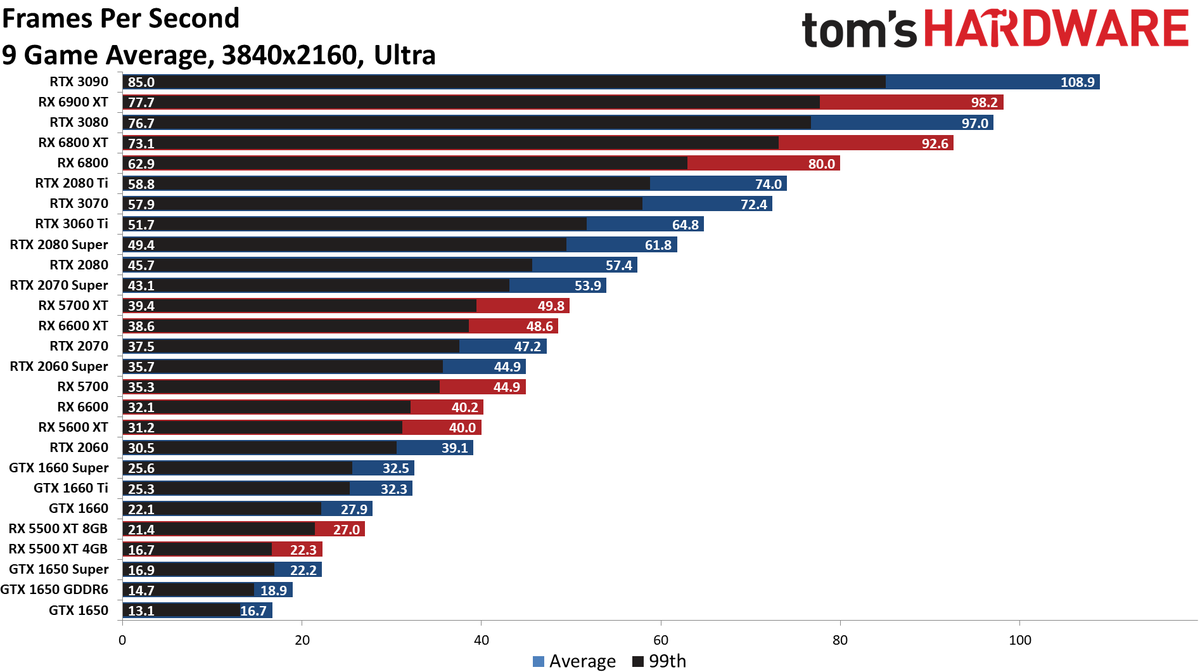

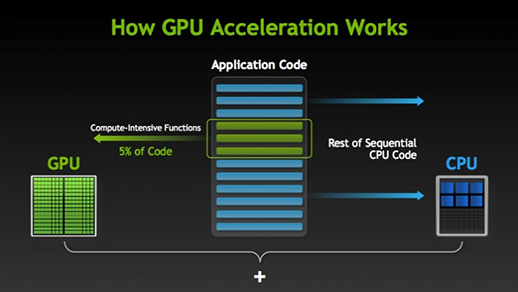

Hardware for Deep Learning. Part 3: GPU, by Grigory Sapunov

Nvidia, Microsoft Open the Door to Running AI Programs on Windows PCs

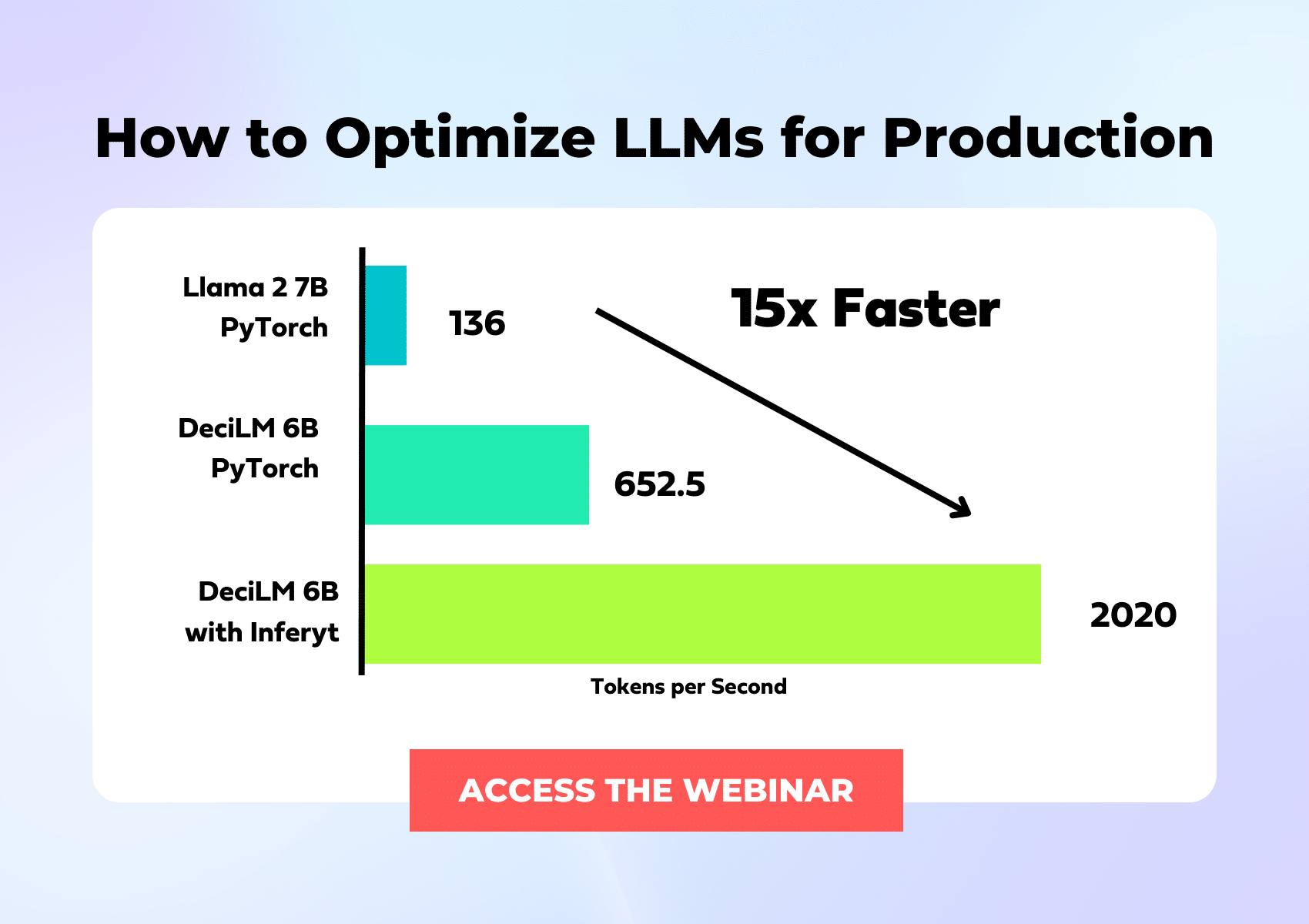

Deci Launches New Version of Its Deep Learning Platform Supporting Generative AI Model Optimization

Why GPUs are more suited for Deep Learning? - Analytics Vidhya

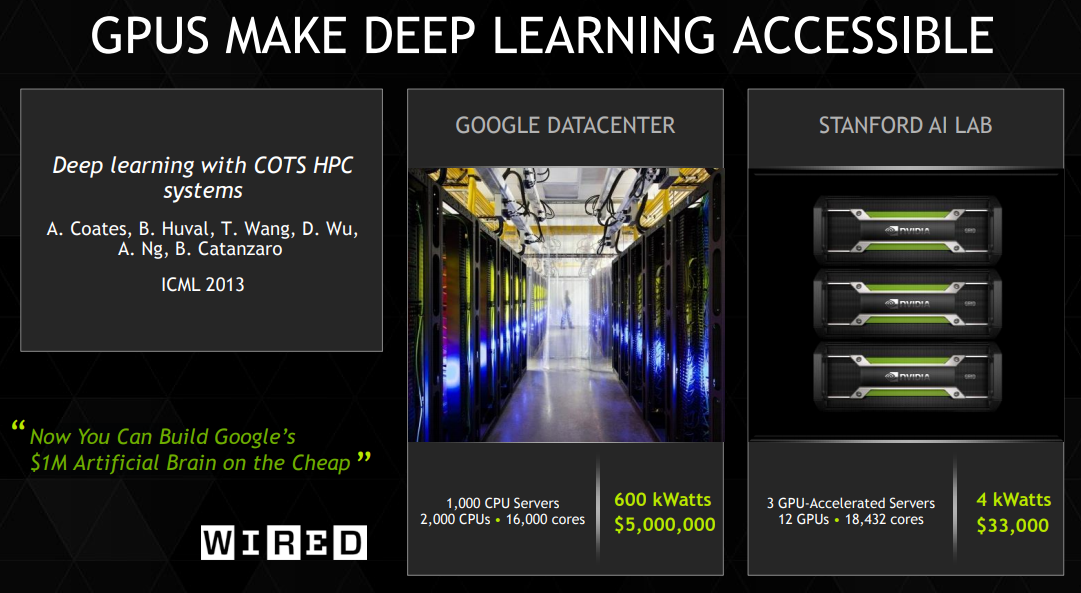

Why GPUs for Deep Learning? A Complete Explanation

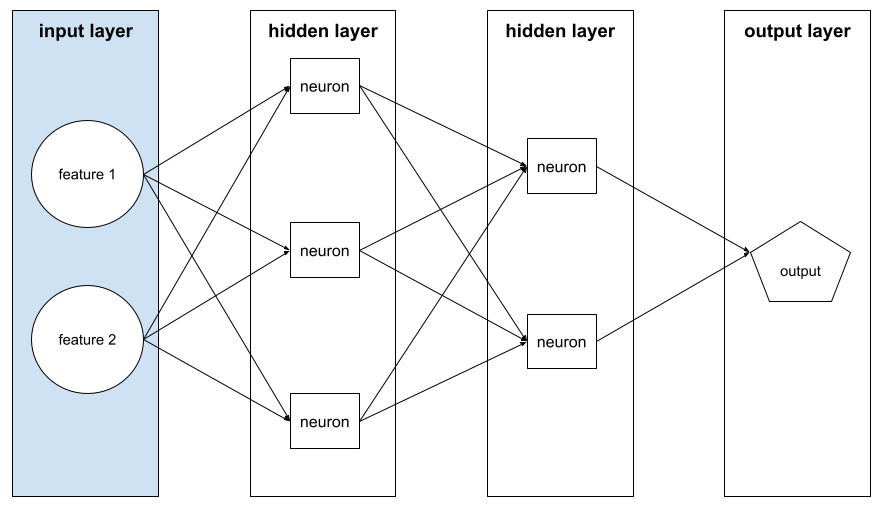

Machine Learning Glossary

Does a CPU/GPU's performance affect a machine learning model's accuracy? - Quora

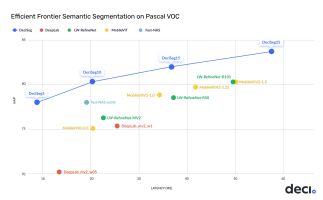

Deci Advanced semantic segmentation models deliver 2x lower latency, 3-7% higher accuracy

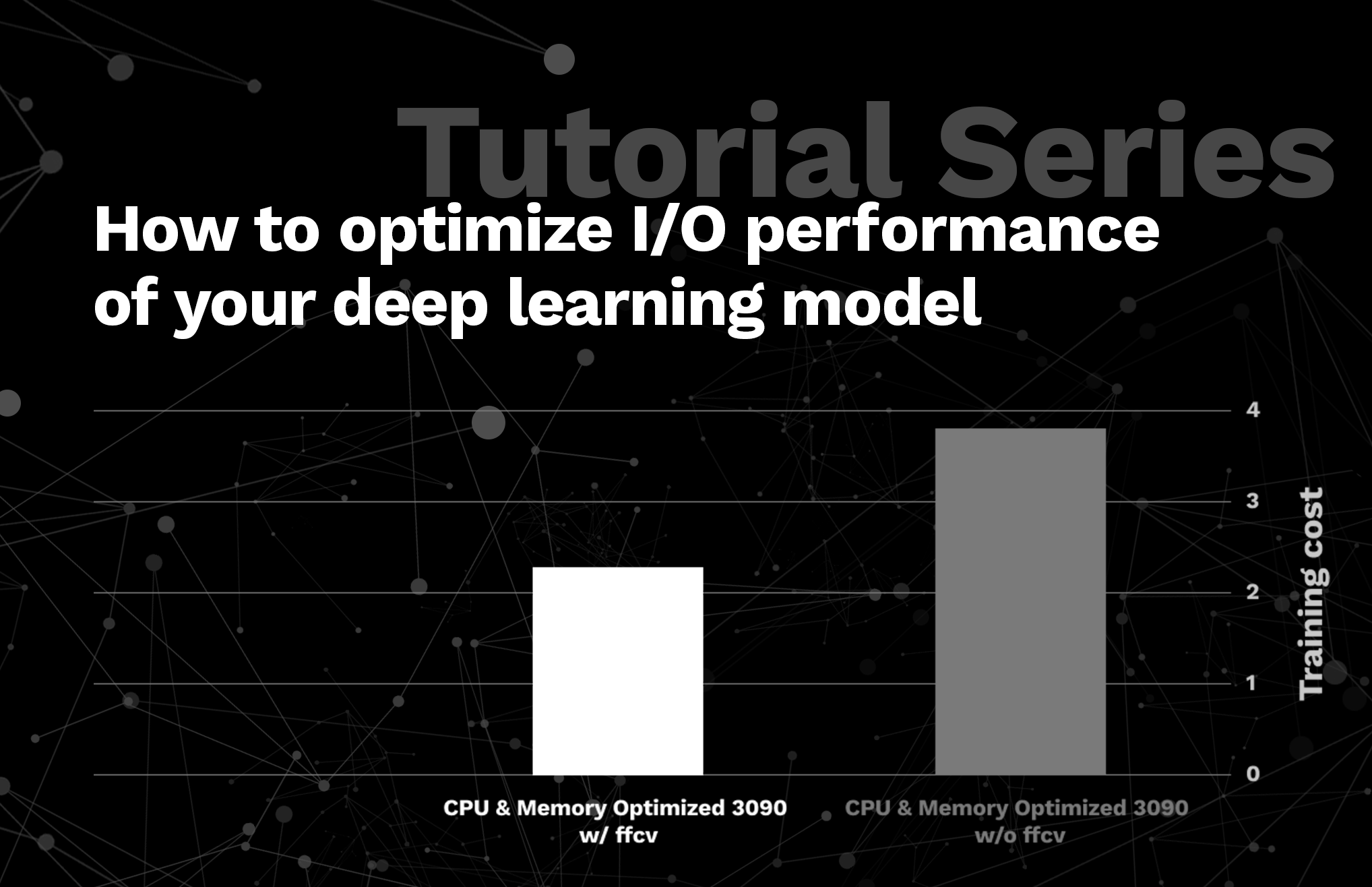

Tutorial Series: How to optimize I/O performance of your deep learning model

Israeli startup Deci lands $9.1M using AI to train AI to be its best self

Is there a way to Train simultaneously on CPU and GPU? (e.g. 2 separate neural network models) - Quora
